Real-world HAR systems frequently encounter activities not

present in the knowledge base. Traditional deep learning

classifiers extend the label space in a binary way, sending all

out-of-distribution inputs to a single "unknown"

class—collapsing diverse forms of novelty into one

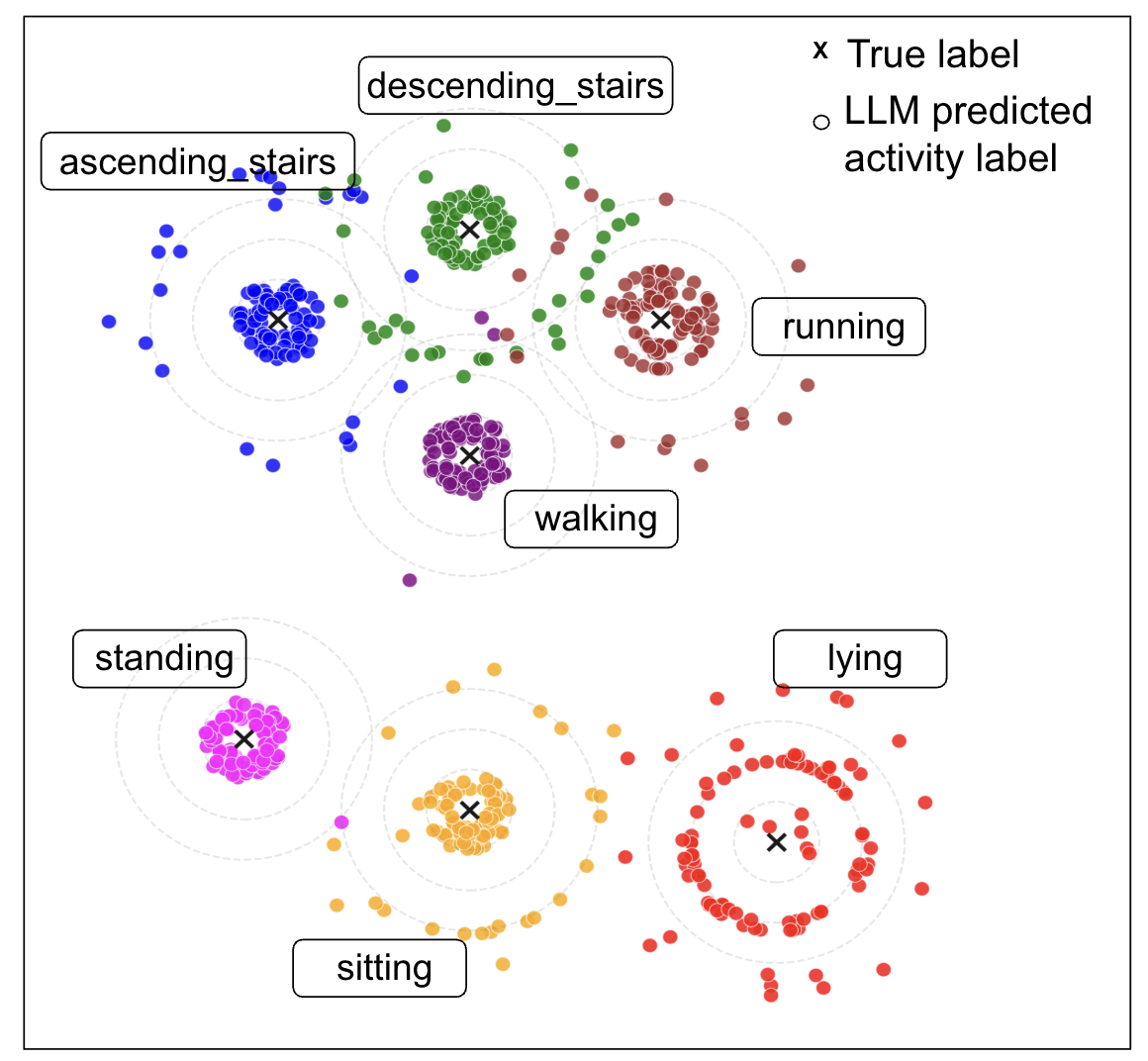

undifferentiated bucket. RAG-HAR addresses this through

open-set classification, leveraging LLM

reasoning to detect, reject, and even

generate meaningful labels for previously unseen

activities.

Open-Set Detection

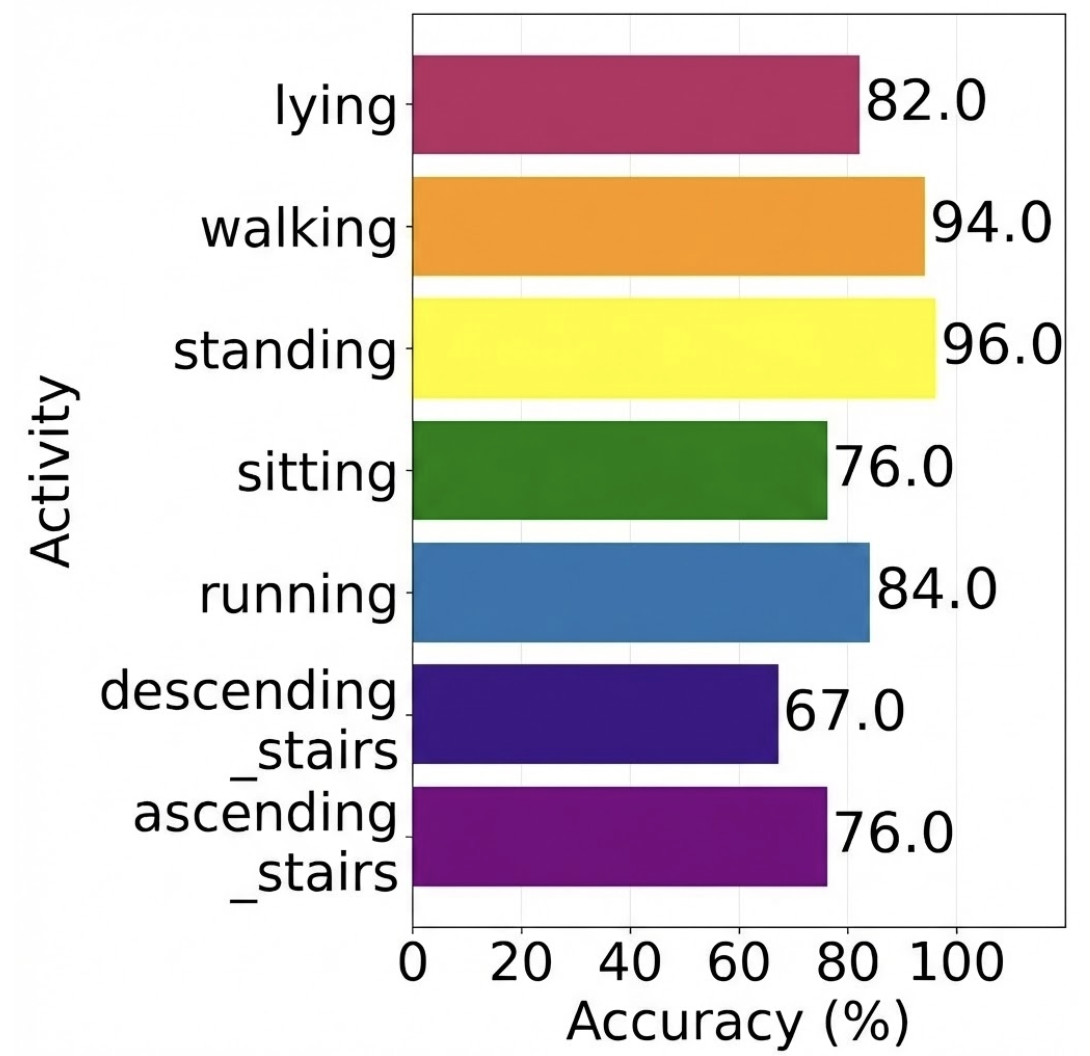

We evaluate open-set classification on the PAMAP2 dataset under three openness conditions (30%, 50%, and 70% of activities held out as unknown). RAG-HAR consistently outperforms baseline methods, demonstrating strong performance at both low and high openness.
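
As a rough illustration of what an openness condition means, the sketch below holds out a fraction of the activity classes as unknown. The activity names, the `split_by_openness` helper, and the random selection with a fixed seed are assumptions made for this example; the section does not state which classes were held out or how they were chosen.

```python
import random

def split_by_openness(classes, openness, seed=0):
    """Hold out a fraction `openness` of the classes as unknown.

    Returns (known, unknown). The held-out classes are picked at random,
    which is an assumption of this sketch, not a documented protocol.
    """
    rng = random.Random(seed)
    shuffled = list(classes)
    rng.shuffle(shuffled)
    n_unknown = round(len(shuffled) * openness)
    unknown = sorted(shuffled[:n_unknown])
    known = sorted(shuffled[n_unknown:])
    return known, unknown

# Hypothetical activity names, used only for illustration.
ACTIVITIES = ["lying", "sitting", "standing", "walking", "running",
              "cycling", "ironing", "vacuuming", "rope_jumping", "stairs_up"]

for openness in (0.3, 0.5, 0.7):
    known, unknown = split_by_openness(ACTIVITIES, openness)
    print(f"openness={openness:.0%}: {len(known)} known, {len(unknown)} unknown")
```

At test time, samples whose true class falls in `unknown` should be rejected rather than assigned to one of the `known` classes.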

Impact of Label Availability

Using a leave-one-class-out protocol, we investigate how label availability affects unseen activity recognition. When the true label is available in the prompt (as a semantic anchor), accuracy reaches 78.0%. When it is replaced with a generic "unseen activity" placeholder, accuracy drops to 63.2%, a decline of about 15 percentage points. This reveals that LLMs can leverage semantic knowledge embedded in labels to improve unknown activity detection.
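
The two prompt conditions can be sketched as follows. This is a minimal illustration, assuming the held-out class has no examples in the knowledge base and that the prompt offers the LLM a list of candidate labels; `leave_one_class_out` and `candidate_labels` are hypothetical helpers, not the authors' code. The last lines recompute the gap between the two reported accuracies.

```python
def leave_one_class_out(all_classes):
    """Yield (held_out, remaining) pairs, one per class."""
    for held_out in all_classes:
        yield held_out, [c for c in all_classes if c != held_out]

def candidate_labels(remaining, held_out, reveal_true_label):
    """Labels offered to the LLM for a sample of the held-out class.

    With reveal_true_label=True the real class name acts as a semantic
    anchor; otherwise a generic placeholder takes its place.
    """
    extra = held_out if reveal_true_label else "unseen activity"
    return list(remaining) + [extra]

# Hypothetical class names for illustration.
classes = ["walking", "cycling", "ironing"]
for held_out, remaining in leave_one_class_out(classes):
    print(held_out,
          candidate_labels(remaining, held_out, True),
          candidate_labels(remaining, held_out, False))

# Gap between the two reported accuracies.
acc_with_label, acc_placeholder = 78.0, 63.2
absolute_drop = round(acc_with_label - acc_placeholder, 1)      # percentage points
relative_drop = round(100 * absolute_drop / acc_with_label, 1)  # percent of the anchored accuracy
print(absolute_drop, relative_drop)
```

The absolute gap is in percentage points; measured relative to the anchored accuracy, the decline is somewhat larger.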

Labeling Unseen Activities

Beyond detection, RAG-HAR can generate meaningful labels for novel activities, a capability unique to LLM-based approaches. The LLM generates a label, which is then mapped to the closest ground-truth class via cosine similarity.
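
The mapping step can be sketched as below. The section does not say which embedding model produces the vectors, so this example substitutes a toy character-trigram embedding; `embed`, `cosine`, and `map_to_closest_class` are hypothetical names, and a real system would presumably use a pretrained text encoder instead.

```python
import math
from collections import Counter

def embed(text, n=3):
    """Toy embedding: character n-gram counts (a stand-in for a real text encoder)."""
    padded = f" {text.lower().strip()} "
    return Counter(padded[i:i + n] for i in range(len(padded) - n + 1))

def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[k] * b[k] for k in a.keys() & b.keys())
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0

def map_to_closest_class(generated_label, class_names):
    """Return the ground-truth class whose name is most similar to the generated label."""
    query = embed(generated_label)
    return max(class_names, key=lambda name: cosine(query, embed(name)))

# Hypothetical LLM output and class names.
print(map_to_closest_class("jumping rope", ["rope jumping", "ironing", "cycling"]))
```

A trigram embedding only captures surface overlap, so it would miss synonyms such as "jogging" versus "running"; that is where a semantic encoder matters.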

Unlike conventional DL models that collapse all unknowns into a single undifferentiated class, RAG-HAR leverages pretrained semantic knowledge to assign fine-grained, human-interpretable labels, effectively partitioning the unknown space into meaningful subcategories. This capability is critical for deploying HAR in healthcare monitoring, elderly care, and safety-critical applications where encountering new activities is inevitable.